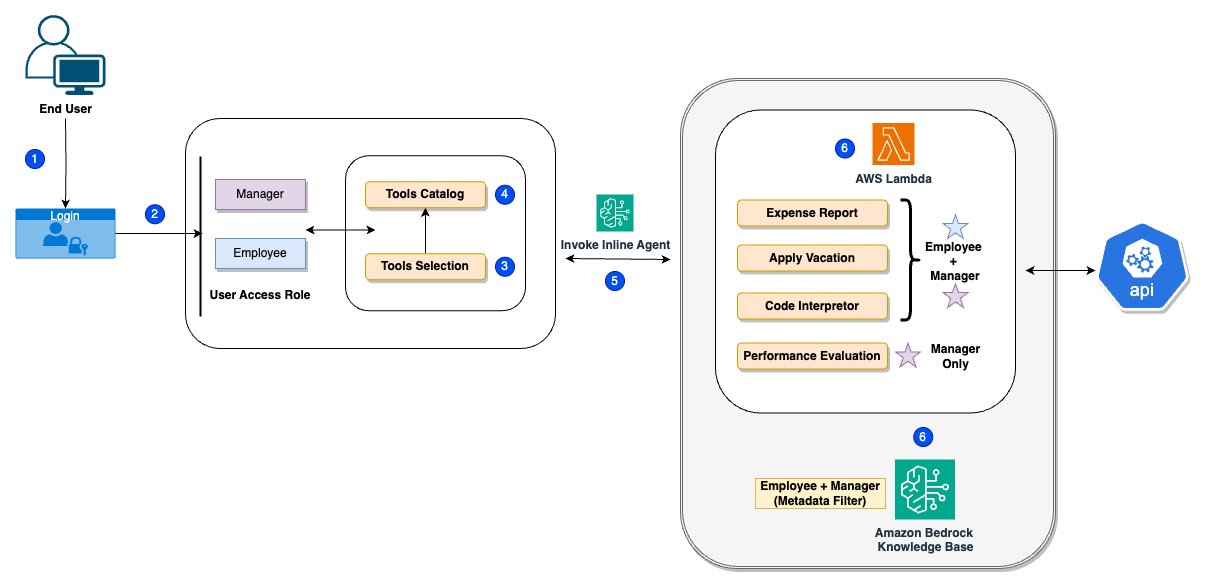

AI agents continue to gain momentum, as businesses use the power of generative AI to reinvent customer experiences and automate complex workflows. We are seeing Amazon Bedrock Agents applied in investment research, insurance claims processing, root cause analysis, advertising campaigns, and much more. Agents use the reasoning capability of foundation models (FMs) to break down user-requested tasks into multiple steps. They use developer-provided instructions to create an orchestration plan

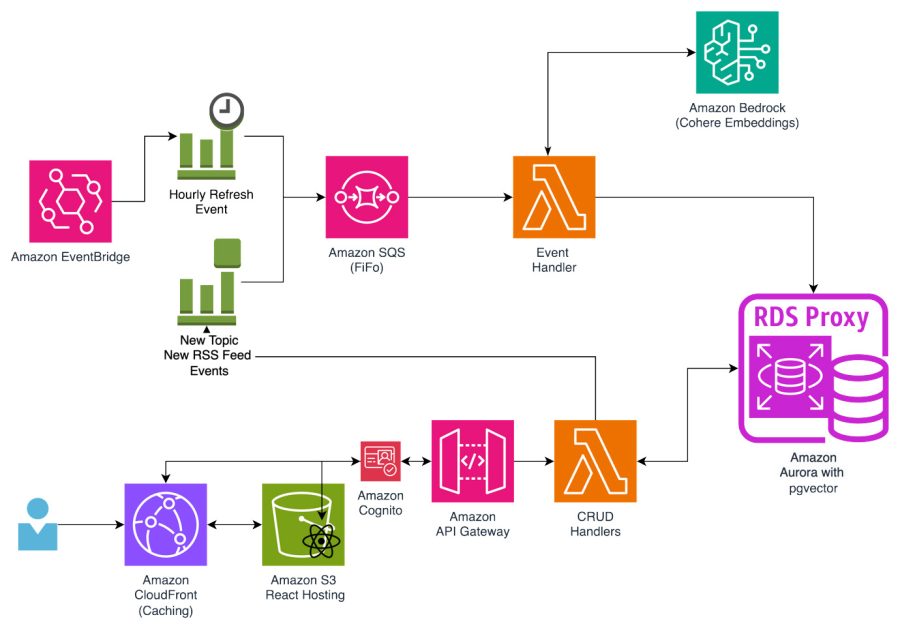

In this post, we discuss what embeddings are, show how to practically use language embeddings, and explore how to use them to add functionality such as zero-shot classification and semantic search. We then use Amazon Bedrock and language embeddings to add these features to a really simple syndication (RSS) aggregator application. Amazon Bedrock is a fully managed service that makes foundation models (FMs) from leading AI startups and Amazon

I have long felt confused about the question of whether brain-like AGI would be likely to scheme, given behaviorist rewards. …Pause to explain jargon: “Brain-like AGI” means Artificial General Intelligence (AI that does impressive things like inventing technologies and executing complex projects) that works via algorithmic techniques similar to those the human brain uses to do those same types of impressive things. See Intro Series §1.3.2. I claim that brain-like AGI is a not-yet-invented variation

Tech companies, data center developers, and power utilities have been panicking over the prospect of runaway demand for electricity in the U.S. in the face of unprecedented growth in AI. Amidst all the hand-wringing, a new paper published this week suggests the situation might not be so dire if data center operators and other heavy electricity users curtail their use ever so slightly. By limiting power drawn from

At the recent Generative AI Summit in Toronto, I had the opportunity to sit down with Manav Gupta, Vice President and CTO, IBM Canada, to discuss the company’s current work in generative AI and explore its vision for the future. Here are the key insights from our conversation, highlighting IBM’s ecosystem leadership, industry impact, and strategies to navigate challenges in the generative AI landscape. IBM’s position in the

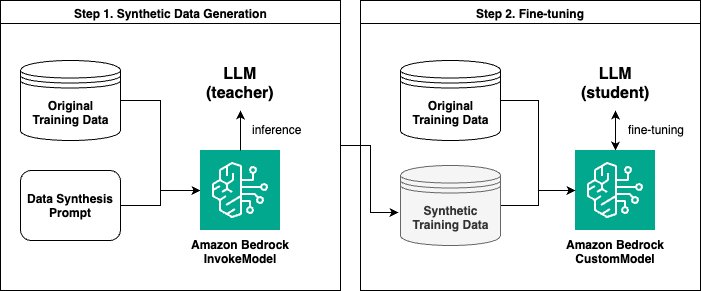

There’s a growing demand from customers to incorporate generative AI into their businesses. Many use cases involve using pre-trained large language models (LLMs) through approaches like Retrieval Augmented Generation (RAG). However, for advanced, domain-specific tasks or those requiring specific formats, model customization techniques such as fine-tuning are sometimes necessary. Amazon Bedrock provides you with the ability to customize leading foundation models (FMs) such as Anthropic’s Claude 3 Haiku and

This blog post is co-written with Moran Beladev, Manos Stergiadis, and Ilya Gusev from Booking.com. Large language models (LLMs) have revolutionized the field of natural language processing with their ability to understand and generate humanlike text. Trained on broad, generic datasets spanning a wide range of topics and domains, LLMs use their parametric knowledge to perform increasingly complex and versatile tasks across multiple business use cases. Furthermore, companies are

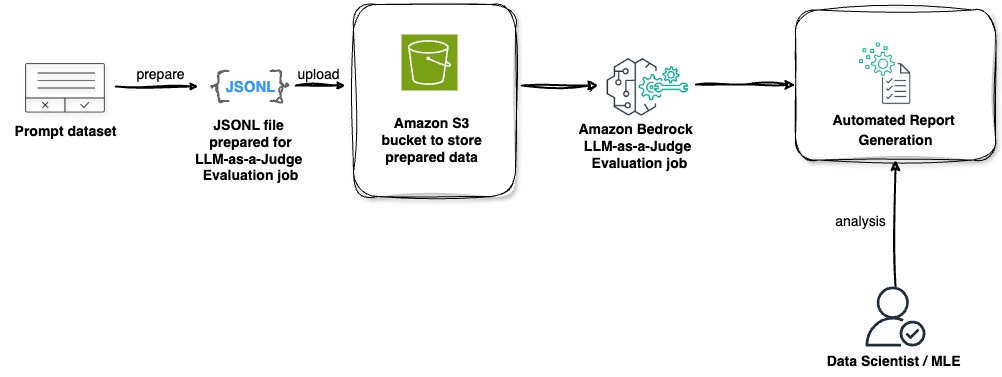

The evaluation of large language model (LLM) performance, particularly in response to a variety of prompts, is crucial for organizations aiming to harness the full potential of this rapidly evolving technology. The introduction of an LLM-as-a-judge framework represents a significant step forward in simplifying and streamlining the model evaluation process. This approach allows organizations to assess their AI models’ effectiveness using pre-defined metrics, making sure that the technology aligns

Generative AI has emerged as a transformative force, captivating industries with its potential to create, innovate, and solve complex problems. However, the journey from a proof of concept to a production-ready application comes with challenges and opportunities. Moving from proof of concept to production is about creating scalable, reliable, and impactful solutions that can drive business value and user satisfaction. One of the most promising developments in this space

https://www.youtube.com/watch?v=wxLnHa-7qls

At the Artificial Intelligence (AI) Action Summit in Paris on February 11, President Ursula von der Leyen introduced InvestAI, a groundbreaking initiative to mobilize €200 billion for AI investment. Central to this effort is a €20 billion European fund dedicated to AI gigafactories, large-scale infrastructure designed to foster open, collaborative development of the most advanced AI models and position Europe as a global AI leader. President Ursula von der